If your SaaS company blog is built on hundreds of “best X” listicles with your own product ranked first, Google just sent you a message, without saying a word.

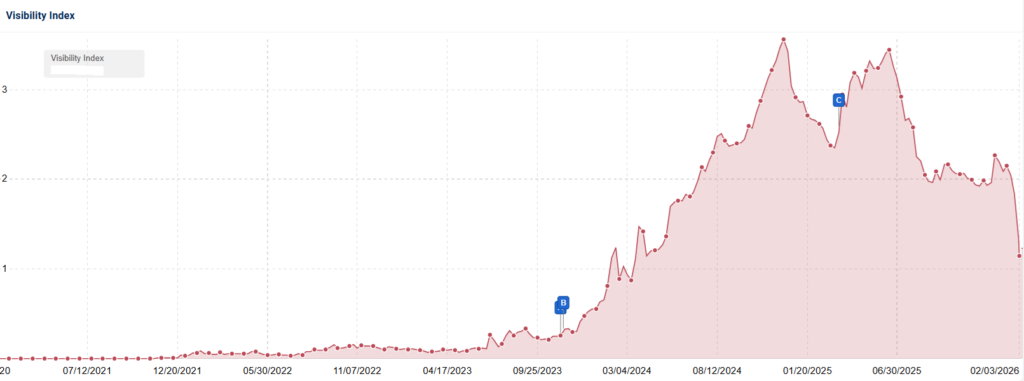

In late January 2026, sites running this exact playbook lost 29% to 49% of their organic visibility. Entire blog sections that once drove thousands of monthly visits went quiet overnight. The unconfirmed January 2026 Google update was never announced, never confirmed, never acknowledged.

But Lily Ray’s investigation of 11 affected sites, community-wide traffic collapse reports, and continued volatility through February tell a clear story: this was targeted, surgical, and aimed at a content pattern that thousands of SaaS and B2B marketing blogs share.

And the damage goes deeper than your Google Search Console dashboard can show. Sites that lost organic rankings also lost their presence in AI Overviews and AI-search citation interfaces, a compounding visibility hit that most analyses of this update miss entirely.

I’ve spent the past several weeks tracking the fallout across affected sites, SERP data, and industry analyses. This guide breaks down what the January 21 update actually targeted, who got hit hardest, how to diagnose your exposure, and what to do if your traffic dropped.

Lily Ray’s Investigation: 11 Sites, One Pattern

Lily Ray’s investigation provides the clearest and most detailed picture available of this update. She examined 11 SaaS and marketing sites that experienced steep visibility drops beginning around late January 2026, cross-referencing the timing with the January 21 volatility spike and examining content structure, URL patterns, and ranking behavior across each site.

The pattern she found was unmistakable.

The Content Pattern

For several of the affected sites, 70% to 90% of their total organic visibility was concentrated in blog, resources, and tutorials folders. These folders were filled with “best X” listicles, produced at staggering scale. Individual sites had 191, 228, 267, and 340+ “best” posts each.

The defining characteristics across these sites:

- The publisher’s own product was consistently ranked #1 in every single listicle

- Posts followed nearly identical templates across the entire library, same structure, same conclusions, same product winning every comparison

- The predetermined conclusion was the defining feature: no matter the query, no matter the category, the company’s own tool always came out on top

- Little to no explanation of methodology for how products were chosen and ranked

- No disclosure of the obvious conflict of interest

The Damage

Visibility losses across the 11 sites ranged from approximately 29% to 49%. Specific figures Ray documented include losses of roughly 49%, 43%, 42%, 38%, 34%, and 29% for different sites across late January to early February.

Source: Lily Ray

A critical nuance: these losses were concentrated in blog sections, not sitewide. Core product pages on the same domains often remained stable. Ray called the update a “bloodbath” on X, and the data supports that characterization.

Sites didn’t bounce back after the initial drop. They continued declining through February with no quick rebound, suggesting a sustained quality reassessment rather than a temporary fluctuation.

How to Check Your Own Exposure

Ray’s diagnostic query cuts straight to the point: site:yourdomain.com/blog intitle:"best" "1. [your brand]". If this query returns dozens or hundreds of results, you’re running the exact pattern Google targeted. Follow up by checking how many of those posts rank your own product first.

If most do, you match the profile of the hardest-hit sites. Volume is the multiplier. Five posts matching this pattern won’t trigger issues. Three hundred will.

Why This Matters Beyond 11 Sites

This content pattern is endemic in SaaS, B2B, and marketing technology. Thousands of companies built organic strategies around this exact playbook over the past several years. Ray’s 11 sites are case studies, not outliers.

The crackdown on self-promotional listicles wasn’t random. It targeted a specific, identifiable content pattern that an entire industry vertical shares. If you’re in SaaS or B2B and your blog strategy centers on “best X” content, this investigation is about you.

The “Gray Area” of Self-Promotional Listicles

Where exactly does legitimate content marketing end and manipulative ranking behavior begin? Lily Ray’s framing is precise: self-promotional listicles are a “gray area of SEO.” They’re not outright malicious, and they’re not spam in the traditional sense. But they’re clearly designed more for search manipulation than for unbiased, evidence-backed recommendations. That gray area is exactly what the January update appears to have collapsed.

Legitimate Reviews vs. Self-Promotion

Legitimate review and comparison content looks like the Wirecutter model:

- An editorial wall separates reviews from business interests

- Products are genuinely tested, and methodology is transparent

- Competitors can and do outrank the reviewer’s own product when the evidence supports it

Self-promotional listicles look different:

- The publisher’s product is always #1

- Justifications for rankings are thin

- Templates replicate at scale with no scenario where the publisher’s product loses

- No disclosure of the obvious conflict of interest

The tell is volume combined with uniformity. A company blog with 5 comparison posts isn’t raising red flags. A company blog with 340 “best X” posts where the same product wins every time is a pattern Google’s systems can now detect. The intent behind the content becomes obvious at scale, even if any individual post could pass as legitimate.

The Spectrum of Risk

Not all comparison content carries equal risk:

- Low risk: Independent review sites with genuine editorial separation, transparent testing methodology, and honest results. These sites maintained their positions through January, according to Stan Ventures’ analysis.

- Medium risk: Company blogs with occasional comparison posts that disclose relationships, include real pros and cons, and don’t always rank their product first. This category saw mixed results depending on volume and template similarity.

- High risk: Hundreds of templated “best X” listicles with the publisher’s product always winning, no evidence of testing, and production scaled via AI generation. This is what the unconfirmed January 2026 update targeted directly.

This is a gradient, not a binary switch. Google’s systems appear to be getting better at detecting where content falls on this spectrum.

Why Google Is Acting Now

Glenn Gabe’s analysis suggests Google may be moving toward continuous quality adjustments rather than discrete labeled updates. The reviews system and helpful content signals appear to be evolving to detect self-serving ranking patterns at scale. As AI makes mass-producing listicle content cheaper and faster, Google’s incentive to catch it accelerates.

If you can’t tell whether your listicles are editorial or promotional, Google’s systems have already decided for you.

Who Got Hit: Content Types and Verticals Most Affected

The hardest part of an unconfirmed update is figuring out if you’re in the blast radius. After tracking the fallout for weeks, I can map the specific content types and verticals most affected by this apparent reviews-system activity.

Content Types Most Affected

- Self-promotional “best X” listicles: The primary target. Sites running 100+ templated posts with their own product always ranked #1 saw visibility losses between 29% and 49%. This is the content type Lily Ray’s investigation documented most thoroughly, and it represents the clearest pattern in the data.

- Thin review and roundup content: Review pages built from manufacturer copy and public specs, with minimal evidence of first-hand use or unique insights, lost ground across multiple verticals. Glenn Gabe tied these losses to content that fails to demonstrate genuine experience and value.

- AI-generated content clusters: Pages targeting long-tail commercial queries with highly similar intros, structures, and sections across dozens or hundreds of pages. Artificial freshness tactics (aggressive date updates without meaningful content changes) didn’t protect them.

- Programmatic informational pages: Mass-produced “who/what/is/how” pages targeting informational queries with minimal original value, designed to capture traffic rather than serve readers.

Verticals Most Affected

- SaaS and B2B marketing tech: The epicenter. Companies that built entire organic strategies around blog-driven listicles felt the full force of the update. For many, blog content represented 70% to 90% of total organic visibility, meaning blog-specific losses translated into catastrophic overall traffic declines.

- Publishers and content sites: The most extreme numbers. Traffic reductions hit as high as 90%, with AdSense revenue declining 50% to 87%. Some sites were reduced to 30% of normal traffic levels, according to community reports documented by YNOT. Webmasters also reported reduced visibility for new content on Discover and Google News, suggesting a broader trust signal decline.

- iGaming and other sensitive verticals: Sustained volatility across January and February, though with less clear ties to the self-promotional listicle pattern. iGaming Today documented ongoing SERP instability that may reflect broader quality recalibration alongside the listicle-targeted hit.

The Selective Nature of the Hit

Stan Ventures’ analysis highlights the most important structural insight: this update was selective, not sitewide. Blog and resources sections experienced 30% to 50% visibility losses. Core product and service pages on the same domains often remained completely stable.

Google appears to be refining trust signals at the content-section level, not applying domain-wide penalties. Your product pages may perform normally even while your blog craters. That distinction matters enormously for how you prioritize recovery efforts.

Timeline: December Core Update, January Aftershocks, and the January 21 Spike

Three distinct events unfolded between December 2025 and late January 2026, and confusing them leads to the wrong diagnosis and the wrong recovery plan.

The December 2025 Core Update (December 11-29)

Google’s December 2025 core update ran an 18-day rollout from December 11 to December 29. This was the last confirmed, officially announced update before January’s chaos began, a broad quality recalibration affecting multiple verticals that set the baseline for everything that followed.

If your traffic started declining during this window, you were hit by a confirmed core update. That’s a different recovery path than what I’m describing here.

January 6-7 Aftershocks

The first week of January brought another wave of ranking movement, widely interpreted as settling behavior from the December core update finishing its rollout. Auto-Post’s analysis supports this interpretation: core updates re-weight signals in waves rather than flipping a single switch.

Key signals from this period:

- Some publishers reported traffic dropping to 30% of normal levels starting December 13, with conditions worsening around January 6

- YNOT documented forum reports of AdSense revenue declining 50% to 87%

- The targeting pattern matched December’s core update: the same verticals and content types continued shifting, with no new categories of sites appearing in loss reports

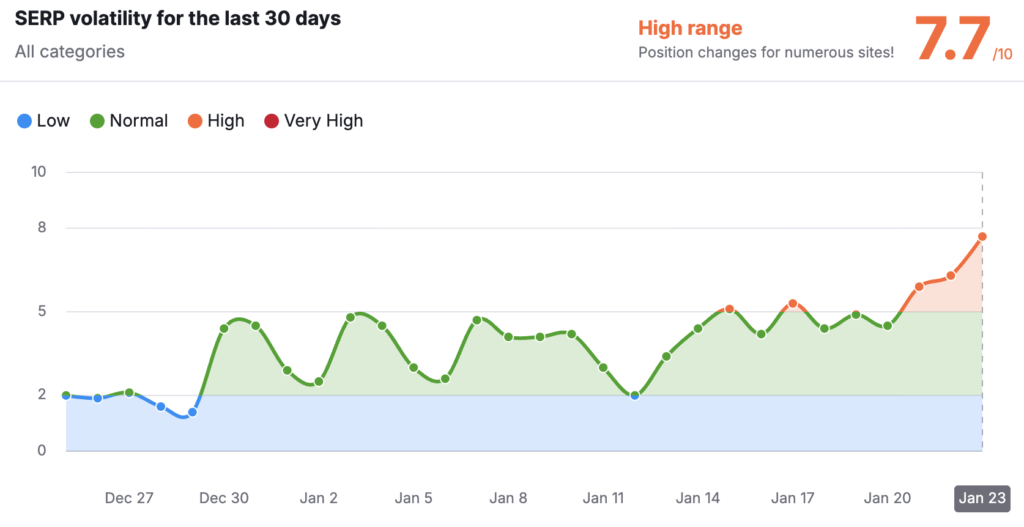

January 21: The Real Story

Then January 21 hit, and the data looked completely different:

- Semrush Sensor spiked above 7

- MozCast reached 116°F

- SimilarWeb and Wincher both confirmed the volatility independently

- Blog and content sections lost 30% to 50% of their visibility while core product pages on the same domains held steady

Source: Search Engine Roundtable

That selective behavior doesn’t match aftershock settling. It matches a new quality adjustment with its own targeting logic. Google made no confirmation. The evidence is entirely community-documented, tracked through SERP tools and individual site reporting.

This wasn’t a one-day blip. SEO-Kreativ documented 9 subsequent volatility waves across 3 fronts through February 2026, with the January 2026 update continuing to reshape rankings weeks after the initial spike.

How to Diagnose If You Were Hit

You can determine your exposure to this update in about 15 minutes. Here’s the diagnostic process, moving from broad signals to specific pattern matching.

Step 1: Check Google Search Console Timing

Pull up your GSC performance report and look for traffic drops aligned with January 21 through 25, 2026. This is the signature window for the unconfirmed update. If your traffic dropped in early January (January 6-7), that’s more likely December core update aftershocks, which require a different response.
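The timing check can be sketched in a few lines of Python. This is a minimal illustration, assuming you have exported daily clicks from GSC into a date-to-clicks mapping; the function name `pct_change_around` and the sample numbers are hypothetical, not real site data.

```python
from datetime import date, timedelta
from statistics import mean


def pct_change_around(daily_clicks, pivot, window_days=7):
    """Percent change in mean daily clicks: the window before `pivot`
    versus the window starting on `pivot`. Missing days are skipped."""
    before_days = [pivot - timedelta(days=i) for i in range(1, window_days + 1)]
    after_days = [pivot + timedelta(days=i) for i in range(window_days)]
    before = [daily_clicks[d] for d in before_days if d in daily_clicks]
    after = [daily_clicks[d] for d in after_days if d in daily_clicks]
    if not before or not after or mean(before) == 0:
        raise ValueError("not enough data around the pivot date")
    return (mean(after) - mean(before)) * 100 / mean(before)


# Made-up series: flat 1,000 clicks per day, falling to 600 from January 21.
start = date(2026, 1, 1)
clicks = {}
for offset in range(34):
    day = start + timedelta(days=offset)
    clicks[day] = 1000 if day < date(2026, 1, 21) else 600

for label, pivot in [("Jan 6", date(2026, 1, 6)), ("Jan 21", date(2026, 1, 21))]:
    print(f"{label}: {pct_change_around(clicks, pivot):.1f}%")
```

Seven-day windows keep weekday and weekend traffic balanced on both sides of the comparison. A drop that shows up around January 21 but not around January 6 points toward the unconfirmed update rather than core update aftershocks.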

Step 2: Segment by URL Path

Compare the performance of your blog, resources, and tutorials folders against your core product and service pages. Use GSC’s page filter to isolate URL paths. If blog sections dropped 30% or more while product pages held steady, you match the January 21 pattern exactly.
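If you prefer to work from an exported page report, the same segmentation can be approximated in Python. The sketch below assumes each row holds a URL plus clicks for a pre-update and a post-update period; the row layout, the `change_by_section` name, and the sample figures are assumptions for illustration only.

```python
from collections import defaultdict
from urllib.parse import urlparse


def change_by_section(rows):
    """Group (url, clicks_before, clicks_after) rows by first path segment
    and return the percent change in clicks for each section."""
    totals = defaultdict(lambda: [0, 0])
    for url, before, after in rows:
        path = urlparse(url).path.strip("/")
        section = path.split("/")[0] if path else "(root)"
        totals[section][0] += before
        totals[section][1] += after
    return {
        section: (after - before) * 100 / before
        for section, (before, after) in totals.items()
        if before > 0  # skip sections with no baseline traffic
    }


# Hypothetical export rows, not real data.
rows = [
    ("https://yourdomain.com/blog/best-crm-tools", 500, 300),
    ("https://yourdomain.com/blog/best-email-tools", 300, 180),
    ("https://yourdomain.com/product/pricing", 400, 400),
]
for section, change in change_by_section(rows).items():
    print(f"/{section}: {change:.1f}%")
```

In this made-up example the blog folder falls 40% while the product folder is flat, which is the selective shape described above.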

Step 3: Run the Diagnostic Query

Use Lily Ray’s query: site:yourdomain.com/blog intitle:"best". Count the results. If you see dozens or hundreds of “best X” posts, you’re running the affected content pattern. A more targeted variant adds your brand name: site:yourdomain.com/blog intitle:"best" "1. [Your Brand]".
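If you audit several domains or folders, a small helper can generate both query variants with straight quotes, which search operators need. This is a convenience sketch; `build_diagnostic_queries` and the "Acme CRM" brand are hypothetical, and the queries still have to be pasted into Google by hand.

```python
def build_diagnostic_queries(domain, brand, section="blog"):
    """Return the broad and brand-first site: queries as plain strings."""
    base = f'site:{domain}/{section} intitle:"best"'
    return {
        "broad": base,
        "brand_first": f'{base} "1. {brand}"',
    }


for name, query in build_diagnostic_queries("yourdomain.com", "Acme CRM").items():
    print(f"{name}: {query}")
```

Passing `section="resources"` or `section="tutorials"` covers the other folders where affected sites concentrated their listicles.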

Step 4: Assess the Self-Promotional Ratio

What percentage of your listicles place your own product first? If it’s 80% or higher, your content fits the high-risk profile identified in Ray’s investigation. Also check for template similarity: uniformity at scale is one of the strongest risk signals.
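Both checks can be roughed out in code once you have a simple inventory of your listicles. The sketch below assumes you have recorded, for each post, the ranked product names and the list of section headings; the function names are hypothetical, and heading-sequence similarity via `difflib` is only one possible proxy for template uniformity, not a reconstruction of anything Google measures.

```python
from difflib import SequenceMatcher
from itertools import combinations


def self_promo_ratio(rankings, brand):
    """Share of listicles whose first-ranked product is `brand`."""
    if not rankings:
        raise ValueError("no listicles supplied")
    first = sum(
        1 for ranked in rankings
        if ranked and ranked[0].casefold() == brand.casefold()
    )
    return first / len(rankings)


def mean_outline_similarity(outlines):
    """Average pairwise similarity (0 to 1) between heading sequences."""
    pairs = list(combinations(outlines, 2))
    if not pairs:
        raise ValueError("need at least two outlines")
    return sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


# Hypothetical inventory: "Acme" stands in for your own product.
rankings = [
    ["Acme", "Rival A", "Rival B"],
    ["Acme", "Rival C"],
    ["Acme", "Rival A"],
    ["Rival B", "Acme"],
    ["Acme", "Rival D"],
]
outlines = [
    ["Intro", "How we chose", "The list", "Verdict"],
    ["Intro", "How we chose", "Pricing", "Verdict"],
]
print(f"own product ranked first: {self_promo_ratio(rankings, 'Acme'):.0%}")
print(f"outline similarity: {mean_outline_similarity(outlines):.2f}")
```

A ratio at or above 0.8 matches the high-risk threshold described in this step. A similarity score close to 1.0 across a large library suggests the posts share one template.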

Step 5: Check AI Citation Status

Search for your brand alongside key queries in Google’s AI Overview. Compare current AI citations to pre-January levels if you have baseline data. If you’ve lost AI citations alongside organic rankings, the total impact is larger than GSC alone reveals. Factor both channels into your recovery baseline.
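If you do have baseline data, the comparison reduces to a set difference. This sketch assumes you keep two sets of queries, those where your brand was cited in an AI Overview before January and those where it is cited now; how you collect them (manual checks or a third-party tracker) is up to you, and `citation_retention` is a hypothetical helper.

```python
def citation_retention(baseline, current):
    """Return (share of baseline queries still cited, sorted lost queries)."""
    if not baseline:
        raise ValueError("baseline is empty; nothing to compare against")
    lost = sorted(baseline - current)
    retained = len(baseline & current) / len(baseline)
    return retained, lost


# Hypothetical query sets, not real tracking data.
baseline = {"best crm tools", "best email tools", "crm pricing", "crm for startups"}
current = {"crm pricing", "crm onboarding checklist"}

retained, lost = citation_retention(baseline, current)
print(f"retained: {retained:.0%}")
print("lost:", ", ".join(lost))
```

Newly cited queries that were not in the baseline are ignored here on purpose, so the figure reflects only how much of the earlier footprint survived.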

The Hidden Cost: AI Search Citations and GEO Visibility

If you’ve confirmed you were hit, there’s a second layer of damage most analyses miss entirely. Sites that lost organic rankings in January also lost their presence in AI Overviews and AI-search citation interfaces. Your traffic charts are understating the real impact.

How Organic Rankings Feed AI Citations

Lily Ray and Glenn Gabe’s research shows a direct relationship: as Google reduces a site’s organic visibility, its content gets cited less in AI Overviews, featured snippets, and other AI-powered surfaces. Google’s AI systems draw from the same trust and quality signals that power organic rankings. When those signals drop, AI citation frequency drops with them.

The Compounding Effect

A site that lost 40% organic visibility didn’t just lose 40% of its blue-link traffic. It also lost AI Overview appearances, which are increasingly where users get answers without clicking through to any website. For SaaS companies whose “best X” listicles were generating AI citations and brand visibility even without clicks, the unconfirmed January 2026 Google update created a double hit.

The total visibility loss is larger than any single analytics tool shows:

- Google Search Console captures organic ranking changes but not AI-generated answer panel disappearances

- Third-party tools that track AI citations tell a more complete story, and for affected sites, that story is worse than the organic data alone suggests

- SaaS companies relied on listicles not just for organic clicks but for brand presence in AI-powered answers, and losing that citation channel removes a marketing layer most teams weren’t even tracking

Why This Changes Recovery Planning

Traditional recovery strategies focused exclusively on regaining organic rankings. That’s no longer sufficient. You now need to monitor both organic rankings and AI-search citations as separate recovery metrics. A site that regains organic positions but doesn’t recover AI citations has only partially recovered.

This is the Generative Engine Optimization dimension that most other analyses of this update have not covered. If you’re only checking Google Search Console for recovery signals, you’re missing half the picture.

Recovery Playbook: Rebuilding After the January 2026 Update

Recovery from quality-system adjustments takes months, not days. Sites hit by the January 2026 update continued declining through February with no quick rebounds. But the path forward is clear, and the companies that start now will recover first.

Priority 1: Stop the Bleeding

Pause publishing new self-promotional listicles immediately. Adding more of the same content that triggered losses won’t help and may accelerate the decline. Don’t make sweeping sitewide changes during a settling period, though: Auto-Post’s analysis warns that aggressive changes during noisy data introduce new variables when clarity matters most.

Identify your worst offenders: the posts with the thinnest justification for ranking your product first. Flag them for revision or removal, but don’t rush into mass deletions before you have a plan.

Priority 2: Rebuild Content with Genuine Editorial Value

This is the core of recovery and the hardest part. The goal is content that would pass editorial review at an independent publication. If a journalist at a trade magazine would look at your “best CRM tools” post and say “this is an ad,” Google’s quality systems are likely reaching the same conclusion.

What rebuilding looks like in practice:

- Transform listicles into genuine guides with transparent methodology for how products are chosen and ranked

- Disclose conflicts of interest clearly: if you’re reviewing products while selling a competing product, say so explicitly

- Allow competitors to outrank your own product where the evidence supports it

- Add first-person evidence: original screenshots, test results, real usage data

- Include genuine pros and cons based on actual usage, including real weaknesses of your own product

Priority 3: Tame AI Content Production

If you’re using AI to mass-produce pages, stop. Use AI to assist research and drafting, but add rigorous human editorial review to every piece before publishing. Every page should contain insights, observations, or analysis that only a human with genuine product experience could provide.

Trim redundant or low-value pages rather than trying to save everything. A page that exists only because a keyword tool said the search volume justified it is now a liability.

Priority 4: Consolidate and Prune

If you have 300+ “best X” posts, you don’t need 300+ “best X” posts. Thirty genuinely useful comparison guides beat 300 templated listicles, both for rankings and for readers who actually need help choosing a product.

Consolidation priorities:

- Merge overlapping topics into comprehensive, genuinely useful guides

- Redirect pruned URLs to consolidated resources

- Group related posts by user intent rather than keyword variation: “best project management tools,” “best project management software,” and “top project management apps” don’t need three separate pages
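One way to draft a consolidation plan is to collapse keyword variants into a shared intent key and redirect the weaker pages to the strongest one. The sketch below is a rough starting point under stated assumptions: the modifier and synonym lists are hypothetical and deliberately tiny, picking the canonical page by clicks is just one heuristic, and every proposed redirect still needs human review.

```python
from collections import defaultdict

# Hypothetical normalization rules; extend these for your own vocabulary.
MODIFIERS = {"best", "top"}
SYNONYMS = {"software": "tools", "apps": "tools", "app": "tools", "tool": "tools"}


def intent_key(slug):
    """Reduce a URL slug to a rough intent key by dropping ranking
    modifiers and mapping near-synonyms to a single word."""
    words = [w for w in slug.lower().replace("-", " ").split() if w not in MODIFIERS]
    return " ".join(SYNONYMS.get(w, w) for w in words)


def redirect_map(pages):
    """`pages` maps URL path -> clicks. Returns old path -> canonical path,
    choosing the highest-click page in each intent group as canonical."""
    groups = defaultdict(list)
    for path in pages:
        slug = path.rstrip("/").rsplit("/", 1)[-1]
        groups[intent_key(slug)].append(path)
    redirects = {}
    for paths in groups.values():
        canonical = max(paths, key=lambda p: pages[p])
        for path in paths:
            if path != canonical:
                redirects[path] = canonical
    return redirects


# Made-up click counts for illustration.
pages = {
    "/blog/best-project-management-tools": 900,
    "/blog/best-project-management-software": 400,
    "/blog/top-project-management-apps": 150,
    "/blog/best-crm-tools": 700,
}
for old, new in redirect_map(pages).items():
    print(f"{old} -> {new}")
```

Here the three project management variants collapse into one group, and the CRM page stands alone with no redirect.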

Priority 5: Monitor Recovery Across Both Channels

Track organic rankings recovery in Google Search Console. Monitor AI-search citation recovery separately; these are independent signals that may recover at different rates. Align your revised content with Google’s E-E-A-T guidance and reviews system documentation. Recovery typically coincides with major reprocessing events, so patience is essential.

What This Means Going Forward

The unconfirmed January 2026 Google update signals a broader shift that matters beyond any single ranking fluctuation. Google appears to be moving away from discrete, labeled updates toward continuous quality recalibration across multiple systems simultaneously, with helpful content, reviews, and spam classifiers all evolving in parallel.

For publishers, this means three things:

- Less transparency, more accountability. Google isn’t going to announce every quality adjustment. The era of preparing for named updates is giving way to an era where your content either meets quality standards continuously or it doesn’t.

- Gray-area tactics have a shorter shelf life than ever. Self-promotional listicles worked for years precisely because they blended legitimate formatting with self-serving rankings. Google’s systems are now catching up to these patterns at scale, and as AI makes mass-producing this content even cheaper, the detection will only accelerate.

- AI search makes quality signals compound. Losing organic trust doesn’t just cost you blue-link traffic anymore. It costs you AI Overview citations, GEO visibility, and brand presence in AI-powered answers. The total cost of running low-quality content at scale is now significantly higher than it was even a year ago.

The companies that recover fastest from the unconfirmed January 2026 update will be the ones that stop treating their blog as a ranking machine and start treating it as a genuine editorial resource. That shift is uncomfortable, but it’s exactly what Google is forcing, and this time, the penalty for ignoring it extends across every surface where your content used to appear.

FAQ

Was the January 21 update confirmed by Google?

No. Google has not announced or confirmed any update around January 21, 2026. The evidence comes entirely from SERP tracking tools (Semrush Sensor above 7, MozCast at 116°F) and community-reported impacts across dozens of sites.

Is the January 21 volatility the same as the January 6-7 volatility?

No, they appear to be separate events. The January 6-7 volatility was widely interpreted as aftershocks from the December 2025 core update. The January 21 spike showed distinctly different targeting patterns, specifically hitting self-promotional listicles and thin review content.

What content was most affected by this update?

Self-promotional “best X” listicles where the publisher ranks their own product first took the heaviest losses, with 29% to 49% visibility drops. Thin review content, AI-generated content clusters, and programmatic informational pages were also affected.

How long does recovery take?

Months, not days. Affected sites continued declining for weeks after the initial hit. Recovery from quality-system adjustments historically requires substantial content overhaul and tends to coincide with major reprocessing events.

Does this update affect AI search citations?

Yes. Sites losing organic rankings also lose presence in AI Overviews and GEO interfaces, making the total impact significantly larger than traditional traffic metrics reveal.

Is this a reviews system update or a core update?

No agreed-upon label exists. The behavior resembles reviews-system-style adjustments. Glenn Gabe suggests it may be part of continuous quality recalibration across helpful content, reviews system, and spam classifiers simultaneously.